The 30 minutes a manager spends preparing their team before an Excel training session is the single biggest variable in whether the session sticks. Walk into the room with a prepared team and even an unprepared instructor will still produce useful retention. Walk in with a polished instructor and an unprepared team and the learning evaporates by Wednesday. This post covers what the prep actually looks like, what to do during the session, and how to measure whether it worked.

Quick Answer

Excel training preparation is a 30-minute manager task done a week before the session. It involves identifying the team’s actual Excel pain (not the generic syllabus), asking attendees to bring a real spreadsheet they’re stuck on, clearing the calendar so the team isn’t half-paying attention to email, and booking a short follow-up for the day after. None of those steps requires IT or budget. All four are commonly skipped.

The payoff is measurable in week-five productivity, not in the immediate “did everyone enjoy the day” survey.

The hidden cost of unprepared training days

Unprepared Excel training is not a free experiment. The cost shows up in three places:

- Direct cost. Six attendees × one day × loaded hourly rate typically comes to $4,000–$8,000 in staff time alone. The training fee is on top of that. If the day produces no behavioural change, the entire spend is sunk.

- Opportunity cost. The deadlines that slipped while the team was in training don’t disappear; they queue up for next week. Without a prep step, the team returns to the queue, applies almost nothing they learned, and the new skills atrophy.

- Cultural cost. A training day that doesn’t translate into real work tells the team that learning days don’t count. The next training booking gets harder; the budget is harder to defend; the cycle continues.

The 30-minute prep changes which side of that equation the day lands on.
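
The direct-cost arithmetic above can be sketched in a few lines. This is a rough illustration only: the 8-hour day, the two hourly rates, and the function name `direct_cost` are assumptions chosen to land inside the $4,000–$8,000 range quoted above, not figures from a real team.

```python
# Rough direct-cost estimate for one training day.
# All inputs below are illustrative assumptions, not real payroll data.

def direct_cost(attendees: int, hours: float, loaded_hourly_rate: float,
                training_fee: float = 0.0) -> float:
    """Cost of the attendees' time plus the (optional) training fee."""
    return attendees * hours * loaded_hourly_rate + training_fee


# Six attendees, one 8-hour day, at two hypothetical loaded hourly rates.
low = direct_cost(attendees=6, hours=8, loaded_hourly_rate=85)
high = direct_cost(attendees=6, hours=8, loaded_hourly_rate=165)
print(f"Staff time alone: ${low:,.0f} to ${high:,.0f}")
# prints: Staff time alone: $4,080 to $7,920
```

Swap in your own headcount, rate, and fee to see what a day that produces no behavioural change would actually cost.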

A practical 30-minute prep model for managers

One week before the session:

- Five minutes — write down the three Excel tasks your team most often gets stuck on. Not generic (“VLOOKUP”); specific (“the monthly revenue rollup that breaks every time we add a new region”). The instructor can tune around real problems if they have them in advance; they cannot guess.

- Ten minutes — email the attendees. Three sentences, no calendar attachment: “Bring one spreadsheet you’re actively struggling with. We’ll work on it during the session. The instructor will not show your data to anyone else.” That’s it. The instruction works.

- Five minutes — block the day on every attendee’s calendar. Decline conflicting meetings on their behalf. Set their Slack/Teams status to “in training, will reply tomorrow.” Half-attention training is no training.

- Ten minutes — book a 15-minute follow-up meeting on each attendee’s calendar for the first business day after training. The agenda is a single question: “What’s the first thing from yesterday you want to apply to your work this week?”

That’s the prep. Total cost: 30 minutes of the manager’s time and zero budget. The follow-up meetings matter most. They’re the difference between “the team did the training” and “the team uses the skills.”

What to do during the session so learning sticks

Three behaviours that double the retention rate at week five:

- Attendees work on their real spreadsheet. The one they brought from the prep step. Ten minutes of solving an actual work problem beats two hours of solving a generic example.

- The manager attends, and is willing to be the worst in the room. Counterintuitive but consistent: when the manager is visibly learning alongside the team, the team takes the learning more seriously. When the manager skips because “I already know this,” the team treats the day as optional.

- Notes go to a shared space, not individual notebooks. A team SharePoint, a OneNote, a shared Google Doc — the artifact has to outlive the session. The notebooks always end up in a drawer.

The first five business days after training

This is where most training programs lose their ROI. The five-day window is when the new skill is fragile and most easily reinforced or abandoned. A practical pattern:

- Day 1. The 15-minute follow-up meeting from prep step 4. One question: what are you applying first? The answer drives the rest of the week.

- Day 2. Each attendee picks one real task from their queue and applies the new technique to it. Manager checks in for five minutes, no judgement, just “how did it go?”

- Day 3. A 30-minute team meeting where each attendee shows the room one thing they did differently this week. This is where the cross-pollination happens.

- Day 4. Manager identifies one process the team did manually before training that the new skill could automate. Assigns it to the attendee most enthusiastic about that technique.

- Day 5. Friday wrap. What stuck, what didn’t, and what to request for the next training. The data feeds the next budget cycle.

That five-day rhythm is what turns the training spend into a process change. Without it, the spend produces a survey result.
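
One way to put the five-day rhythm on the calendar is to generate the dates up front. The sketch below is a minimal illustration: the function names and the `PLAN` wording are hypothetical, and it skips weekends only, so public holidays would need handling separately.

```python
from datetime import date, timedelta

# One line per reinforcement day, paraphrased from the pattern above.
PLAN = [
    "15-minute follow-up: what are you applying first?",
    "Apply the new technique to one real task; five-minute check-in",
    "30-minute team meeting: each attendee shows one change",
    "Manager picks one manual process to automate and assigns it",
    "Wrap-up: what stuck, what didn't, request for next training",
]


def next_business_days(training_day: date, count: int = 5) -> list[date]:
    """Return the next `count` weekdays after training_day (holidays ignored)."""
    days = []
    current = training_day
    while len(days) < count:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday is 0, Friday is 4
            days.append(current)
    return days


def reinforcement_schedule(training_day: date) -> list[tuple[date, str]]:
    """Pair each reinforcement activity with its calendar date."""
    return list(zip(next_business_days(training_day, len(PLAN)), PLAN))


# Example: training held on Friday, 15 May 2026 (an arbitrary date).
for day, activity in reinforcement_schedule(date(2026, 5, 15)):
    print(day.strftime("%a %d %b"), "-", activity)
```

With a Friday training day, Day 1 falls on the following Monday and the wrap lands on the Friday after.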

How to measure whether the training worked

The completion rate is not a measure of training success. The metrics that actually answer the question:

- Time-to-completion on a recurring task measured before and after. If the monthly revenue rollup took three hours and now takes 90 minutes, that’s a measurable training outcome.

- Self-reported confidence on the specific tasks targeted by the training. Use a 1-5 scale, taken before the session and four weeks after. A rise on the targeted tasks is a genuine signal, not a feel-good number.

- Number of new techniques applied to real work in the first 30 days. Counted from the day-1 follow-ups and day-3 sharing meetings.

- Manager’s qualitative read. The least scientific metric and the most accurate. Did the team’s work get noticeably better?
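
The first two metrics reduce to simple before-and-after arithmetic. The sketch below shows one possible way to compute them. The function names and the sample confidence scores are made up for illustration; the 180-to-90-minute figures mirror the revenue rollup example above.

```python
from statistics import mean


def percent_time_saved(before_minutes: float, after_minutes: float) -> float:
    """Percentage reduction in time-to-completion on a recurring task."""
    return (before_minutes - after_minutes) / before_minutes * 100


def confidence_lift(before_scores: list[int], after_scores: list[int]) -> float:
    """Change in the team's average self-reported confidence (1-5 scale)."""
    return mean(after_scores) - mean(before_scores)


# Monthly revenue rollup: three hours before training, 90 minutes after.
print(f"Time saved: {percent_time_saved(180, 90):.0f}%")
# prints: Time saved: 50%

# Hypothetical survey scores for six attendees, before and four weeks after.
before = [2, 3, 2, 3, 2, 3]
after = [4, 4, 3, 4, 3, 3]
print(f"Confidence lift: {confidence_lift(before, after):+.1f} points")
# prints: Confidence lift: +1.0 points
```

Keep the "before" numbers from the prep week; without them there is nothing to compare against.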

Common failure patterns to avoid

- Buying the syllabus instead of designing the day. A canned curriculum is a starting point. The day succeeds when the instructor can adapt to the team’s actual stuck-points — which means you need a prep step that surfaces them.

- Treating onsite vs virtual as a budget decision. It’s a learning-style decision. Virtual works for technique training; onsite wins for confidence and team dynamics. Pick by what the team needs, not by the travel budget.

- Training the team without training the manager. Backsliding is inevitable when the manager doesn’t know enough to coach the new techniques into the team’s daily work.

- Skipping the follow-up meetings. The cheapest part of the program and the part most often cut. Skip these and you’ve spent the training budget on a survey result.

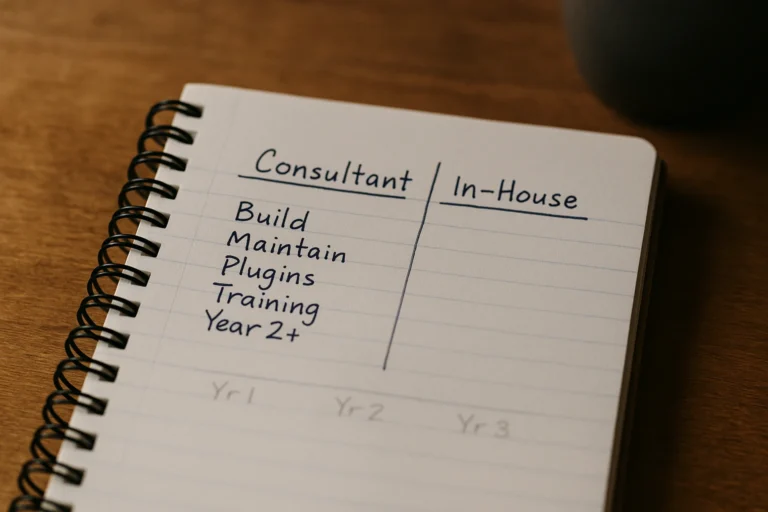

When to bring in someone outside

Most teams can run prep, training, and reinforcement themselves with the model above. Where outside help saves real money:

- The team’s Excel pain is technique-specific (PivotTables, Power Query, advanced formulas) and the manager can’t tune the syllabus alone.

- You’re rolling out training across multiple departments and consistency matters. That calls for a coordinated curriculum and a measurable outcome by department.

- Past training spend hasn’t produced measurable behaviour change, and the next budget cycle needs a different approach.

If you’re booking another training session for the same team and the last one’s outcomes were never measured, you’re solving the wrong problem. A trainer with delivery experience and a measurement framework will land different results in the same time, with the same budget, on the same team.

Last reviewed May 16, 2026.
